How to Rank on Google Part 3: Site HTML Markup

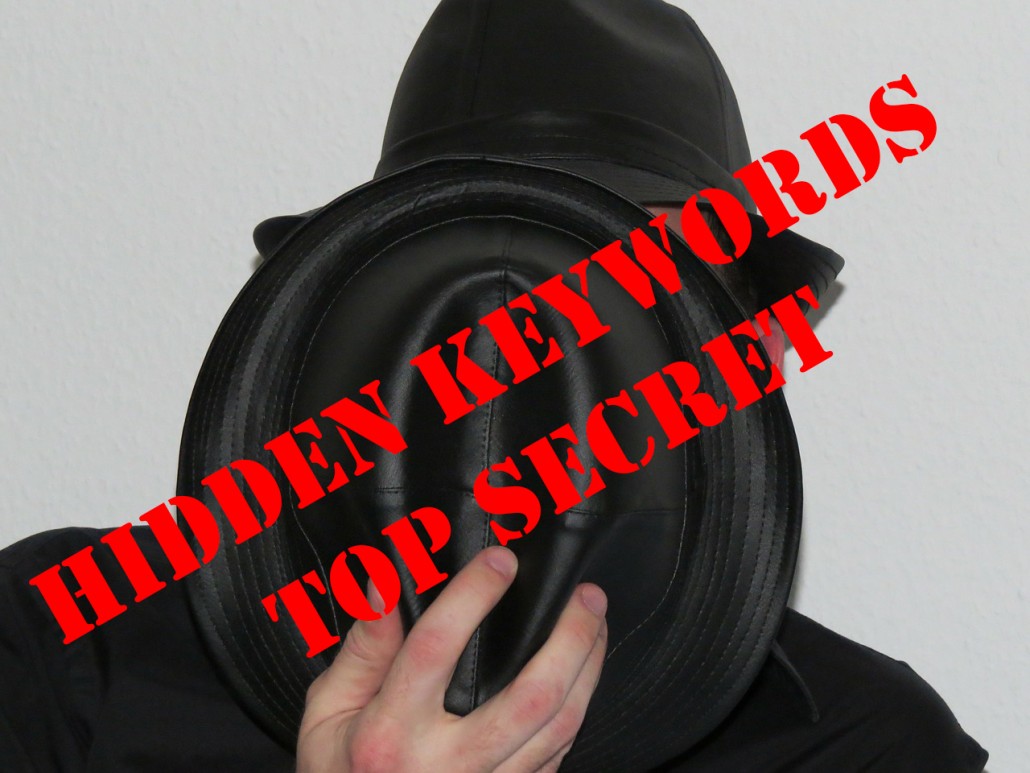

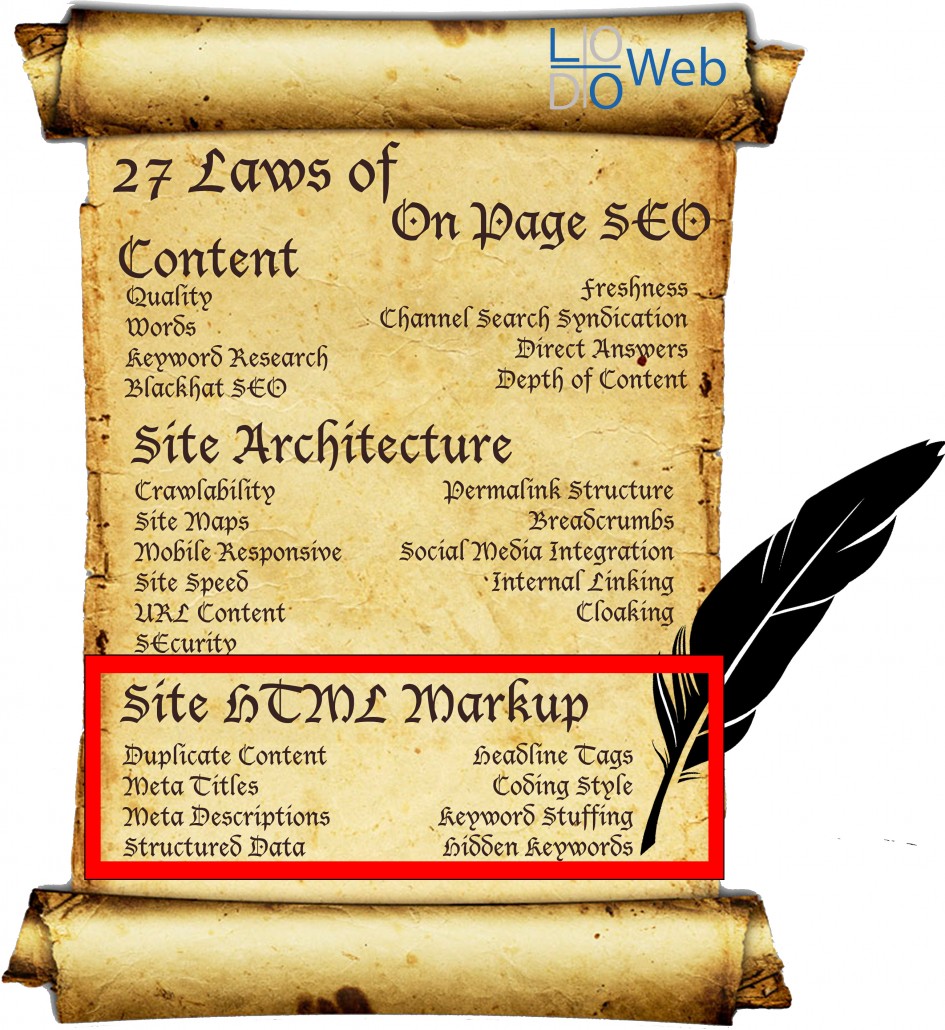

Laws 20-27 of 27 Laws of On Page SEO

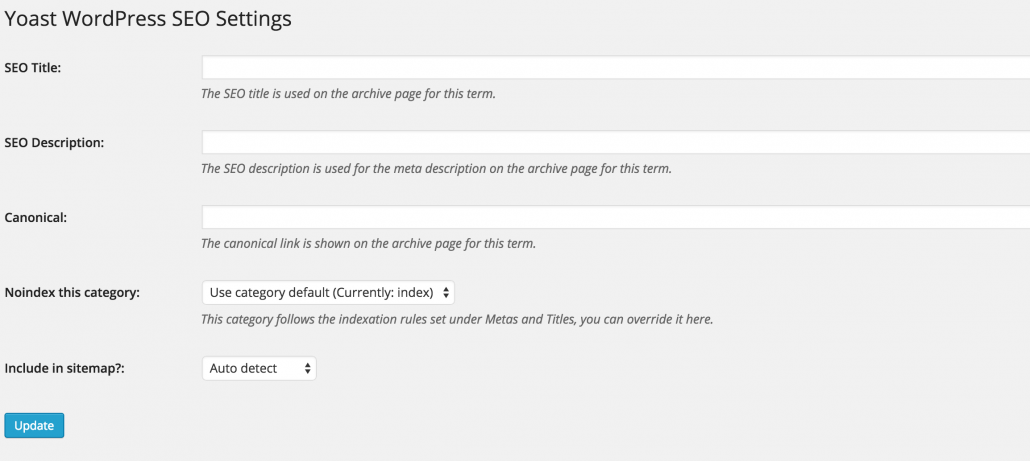

You finally made it. You’ve optimized your content and your site architecture. Isn’t that enough? Wouldn’t you say that’s enough to appease the SEO gods? Aren’t the crawlers satisfied with your effort? Well, no. They aren’t, because now they need to take a look at your actual HTML code. You see, the crawlers don’t see the beautiful text that you see here. What they are looking at is this:

This ugly, confusing, organized mess we call code. This code is what the search engines see when they crawl your site. It is up to you to make it make sense to them. This last part of the three-part series is perhaps the most technical, but do not worry. I’ll go over each line one by one so that you understand it, even if you don’t know how to code.

The last tutorial went over your site architecture. We learned how to structure your site so it makes sense to the crawlers and search engines, and also how to increase your site usability and functionality. As you should know by now, Google wants to see a hierarchical and coherent structure on your site. This is especially important for e-commerce. This time, we are diving into the code and the actual HTML markup. Take a deep breath. It’s going to be OK. Let’s dive right in.

Part 3

LAWS 20-27 – SITE HTML MARKUP

LAW 20: DUPLICATE CONTENT

I briefly talked about duplicate content in How to Rank on Google Part 1. Duplicate content can be a huge problem on the web and is to be avoided at all times. Unless you do it right. There are ways to present duplicate content that give the right attribution to the right source in Google’s eyes.

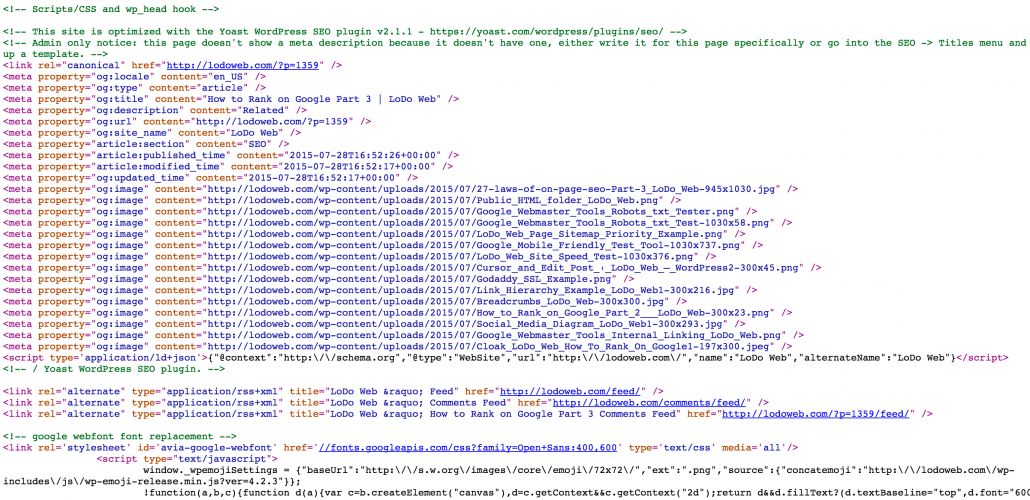

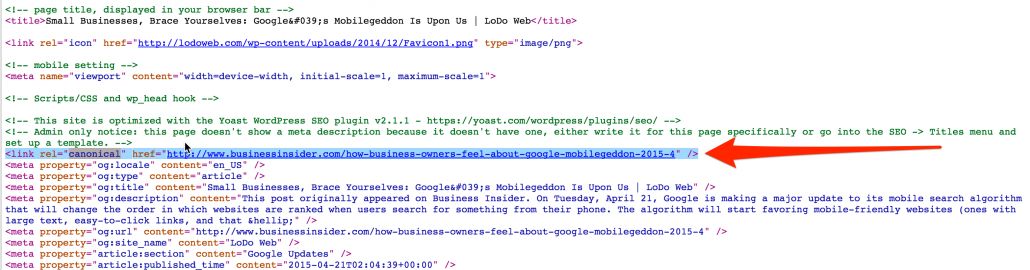

There is an appropriate way of publishing duplicate content, without any penalties, if you would like to do so. You can use something called a canonical link to attribute the content to its original source. Not only should you credit the author on the page, but you should also do so in the code, where Google’s bots can see it.

The code you must use:

<link rel="canonical" href="[ORIGINAL-SITE]" />

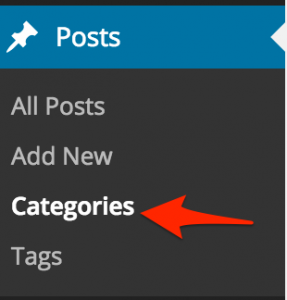

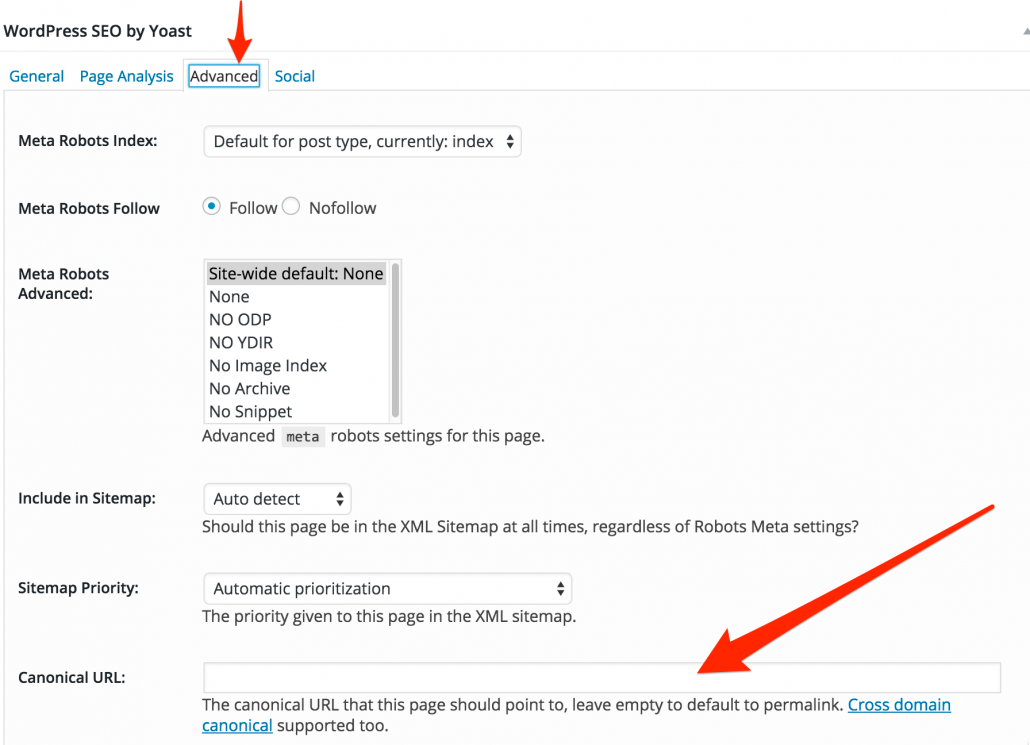

This code should go inside the `<head>` section of your page, above the closing `</head>` tag. If you are on WordPress and are using Yoast’s SEO plugin, you can navigate to the Advanced tab and set the Canonical URL there.

Replace “[ORIGINAL-SITE]” with the URL of the original article. Link directly back to the article itself, not just the site it came from, to avoid any duplicate content penalties. This tells Google that you didn’t create the article and that you’re giving the right attribution to the original author. It’s all about the kudos here. Make sure you give people the credit they deserve when you use an article they wrote. It’s common courtesy and will avoid any Panda violations. I’ll be going into more depth on duplicate content in the next chapter of our On Page SEO Tour.
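To show where the canonical link sits in a page, here is a minimal sketch of a republished article. The URL, title, and body text are placeholders made up for illustration, not taken from any real site.

```html
<!DOCTYPE html>
<html lang="en">
<head>
  <meta charset="utf-8">
  <title>Republished Article Title</title>
  <!-- Placeholder URL: point this at the original article, not your own copy -->
  <link rel="canonical" href="https://example.com/original-article/" />
</head>
<body>
  <p>Republished article text goes here.</p>
</body>
</html>
```

The only line that matters for attribution is the link element. Everything else is there so the snippet is a complete page you can open in a browser.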

LAW 21: META TITLES

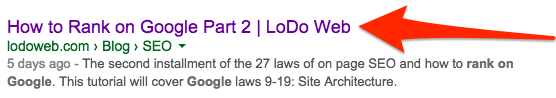

Remember meta keywords? Who uses those anymore? More importantly, what search engine even pays attention to them anymore? The answer is practically none: Google ignores meta keywords for ranking. But meta titles? These little gems give you control over how your content appears in Google. They are closely tied to meta descriptions, which are covered in the next Law. The meta title is the title of your page as it exists in the code, and it is what shows up in the SERP.

This title must be reflected in the actual code of your page.

Meta titles allow the search engines to identify what your page is actually about. The title is inserted inside the `<head>` section of your page.

The Code:

<title>YOUR-META-TITLE</title>

Simply put, if you don’t set the titles on your pages yourself, the search engines will pull information from your site and insert whatever they feel is best. The point of controlling your titles is to get your researched keyword into the meta title. The search engines need to know what keyword you are trying to rank on.
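As a rough illustration of a title built around a researched keyword, the snippet below assumes a hypothetical store whose target keyword is “handmade leather wallets”. Both the keyword and the store name are invented for the example.

```html
<head>
  <!-- Target keyword first, brand name second (both are placeholders) -->
  <title>Handmade Leather Wallets | Example Store</title>
</head>
```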

LAW 22: META DESCRIPTIONS

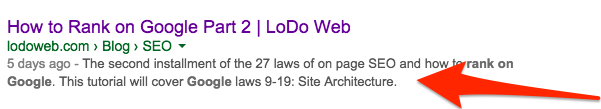

Like meta titles, meta descriptions are also in your control. You have roughly 156 characters to showcase what your page is about. If you go over, Google will simply cut off the remainder of your description and insert an ellipsis.

The Code:

<meta name="description" content="YOUR-DESCRIPTION" />

The code needs to be inserted inside the `<head>` section of the page. Including the keyword you are trying to rank on in the meta description will help Google identify the target keyword of your post.

Remember to always include the researched keyword in your meta description.
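Here is a sketch of a description that contains the target keyword and stays well under the 156-character limit mentioned above. The store, the keyword, and the offer details are all hypothetical.

```html
<head>
  <title>Handmade Leather Wallets | Example Store</title>
  <!-- Placeholder copy: keyword near the start, short enough not to be cut off -->
  <meta name="description" content="Shop handmade leather wallets, cut and stitched by hand in small batches. Free shipping on every order and a lifetime repair guarantee." />
</head>
```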

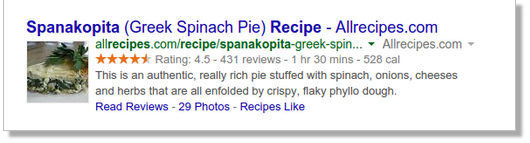

LAW 23: STRUCTURED DATA

Structured data is, simply put, the language of the search engines. It answers the question: what can they extract from your site and show alongside your listing in the SERP? It helps search engines clearly understand what your page is about and lets them add richer information to the SERP. This is most commonly called a “Rich Snippet”. Basically, it’s a search result that can include rich information, like reviews, comments, ratings, or even specific data about that particular article. For example, if you own a company and have Google Reviews, you can insert these reviews into your site and mark them up so they can be included in your search listing. The most common example you see is those rating stars.

This helps users quickly decide whether they want to click on the page, based on the information provided in the SERP. How many times have you decided not to click on something because of its reviews? Structured data isn’t a direct ranking factor, but it does help users choose certain results over others.

You can use Google’s Webmaster Tools platform to help highlight structured data on your site. Clearly this is something search engines are encouraging webmasters, both advanced and novice alike, to embrace.
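One common way to write structured data by hand is a JSON-LD block using the schema.org vocabulary. The sketch below marks up a product with an aggregate rating, the kind of data behind those rating stars. The product name and the numbers are placeholders, and whether a search engine actually shows stars for your page is its decision, not something this markup guarantees.

```html
<!-- Goes anywhere in the page; all values below are hypothetical -->
<script type="application/ld+json">
{
  "@context": "https://schema.org",
  "@type": "Product",
  "name": "Example Leather Wallet",
  "aggregateRating": {
    "@type": "AggregateRating",
    "ratingValue": "4.6",
    "reviewCount": "87"
  }
}
</script>
```

The ratings in the markup should match reviews that visitors can actually see on the page.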

LAW 24: HEADLINE TAGS

Everybody has this in their SEO arsenal. SEO isn’t just about writing high-quality content. It’s also about using the right tags so search engines can quickly crawl the page and identify exactly what keywords are present. Every page has the H1 through H6 tags at its disposal.

These tags are very easy to implement:

<h1>YOUR CONTENT HERE</h1>

You can use H1 through H6 tags, and each step down carries less weight for your keywords. The H1 tag carries the most weight and should contain your selected keyword. As you go further down toward H6, the headings get more and more specific within the broad keyword you are trying to rank on. Here you can use alternative keywords that are similar but different. In other words, you can use synonyms to diversify what you are saying.

This helps content on two fronts. One, it helps you keep your own content organized. Two, it helps keep the code organized for the search engines. Think of it as a hierarchy of H Tags.
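The hierarchy might look something like the sketch below. The headings are invented for a hypothetical wallet store, and the indentation is only there to make the nesting easier to see.

```html
<!-- One broad H1, narrower H2s beneath it, more specific H3s beneath those -->
<h1>Handmade Leather Wallets</h1>
  <h2>Bifold Wallets</h2>
    <h3>Slim Bifold Designs</h3>
  <h2>Card Holders</h2>
    <h3>Minimalist Card Sleeves</h3>
```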

LAW 25: CODING STYLE

A clean and light coding style is important when ranking on Google. Coding style here essentially means not overcomplicating your code with needless HTML. The goal is to keep it light, yet still effective. This means not using the H1 tag more than once on each page, and also keeping your use of inline HTML styling and JavaScript to a minimum. CSS loads faster and is generally the preferred way of handling text colors, sizes and other elements globally.

Overusing HTML styles and inserting them all over your page makes it very difficult for the search engines to crawl. Use CSS instead of inline HTML styling and apply your styles globally. Keep your HTML simple and clean without overusing common HTML elements. Simply put, you want to have professional, clean code.
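To make the difference concrete, here is a small before-and-after sketch. The colors and sizes are arbitrary, and in a real site the CSS rule would normally live in a shared stylesheet file rather than in a style block on the page.

```html
<!-- Heavier: the same presentation repeated inline on every element -->
<p style="color: #333333; font-size: 18px;">First paragraph.</p>
<p style="color: #333333; font-size: 18px;">Second paragraph.</p>

<!-- Lighter: one global rule, plain markup in the page -->
<style>
  p { color: #333333; font-size: 18px; }
</style>
<p>First paragraph.</p>
<p>Second paragraph.</p>
```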

This is also reflected in the themes that you use in WordPress. Some themes have notoriously heavier code and scripts than others, so you want to ensure that you are choosing something that has been vetted for SEO and ranking. Using a one-off theme with limited reviews can affect your SEO negatively because it just doesn’t load efficiently. Remember, crawlers won’t stay on a page too long, especially if it doesn’t load quickly.

Plugins can also overload your HTML and cause things to not function properly on your site. Limit the use of plugins so that your code stays up to standard. Only use themes and plugins that are reputable and have a lot of reviews.

Overall, you want to use current HTML5 and CSS3 so that you have modern code. A lot has changed since the original HTML markup language was released, and it’s your job, as a developer, to stay up to code. Keeping your code light, without too many scripts loading, will keep your site up to standards.

LAW 26: KEYWORD STUFFING

Back in the days of easy SEO, you could simply stuff your keywords into your content and hope for the best. Search engines used to read meta keywords and crawl the content for the relevant keywords and voilà, you were on page 1. Those days are long gone, and repeating the same keyword over and over is going to affect you very negatively.

Instead of repeating the same keyword constantly, use the keyword in different forms and in similar phrases. It sounds difficult, but it comes back to a natural writing style. When you write naturally, you will include related phrases in your content without trying. Avoid stuffing at all costs, and avoid repeating non-relevant keywords in your copy. Both indicate that you are using stuffing techniques, which will negatively affect your rank. Nowadays this is considered black hat SEO and will not help you rank at all. Instead, it will most likely hurt your rank, as the search engines are no longer searching for just keywords in your content.

LAW 27: HIDDEN KEYWORDS

Like cloaking, hidden keywords are not going to help you. Essentially this is keyword stuffing, except that the keywords are hidden, either in the HTML code or on the page itself using a transparent color.

When you throw in a bunch of random keywords designed to fool the search engines, you’ll only end up fooling yourself. No matter how well you hide the keywords in your content or pages, whether in files, code, HTML, or CSS, it will only impact you negatively, as the search engines are a lot smarter than you. Google will know whether your content is relevant to the topic of the page. This is considered a very black hat technique. If you use hidden keywords, either in your meta information or directly on the page, you will eventually get caught, and any rank you gain will be temporary at best. At worst, you may get blacklisted.
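So you can recognize hidden keywords when auditing a site or a theme, here are two illustrative patterns of the kind described above. The keyword list is made up, and these are examples of what to remove, not something to copy.

```html
<!-- Do NOT do this: text colored to match the background so visitors cannot see it -->
<p style="color: #ffffff; background-color: #ffffff;">cheap wallets best wallets buy wallets</p>

<!-- Do NOT do this: a keyword list tucked into an element that is never displayed -->
<div style="display: none;">cheap wallets best wallets buy wallets</div>
```

Hiding an element is not a problem in itself, since menus and tabs do it all the time. The issue is using it to hide keyword lists that were never meant for visitors.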

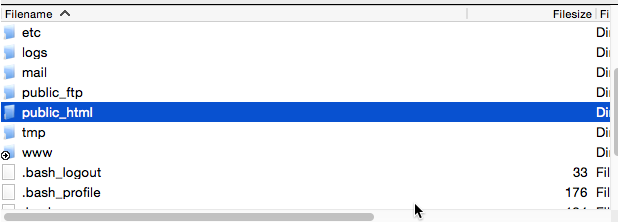

Upload this to the ROOT of your server directory. This should be in the “public_html” folder. You’ll notice that this file is nearly blank. This is intentional. To see a different version, with some blocked content see LoDo Web’s

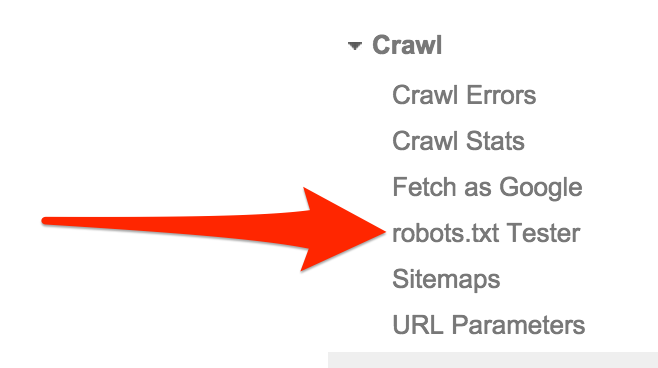

Upload this to the ROOT of your server directory. This should be in the “public_html” folder. You’ll notice that this file is nearly blank. This is intentional. To see a different version, with some blocked content see LoDo Web’s  Another way is to setup up

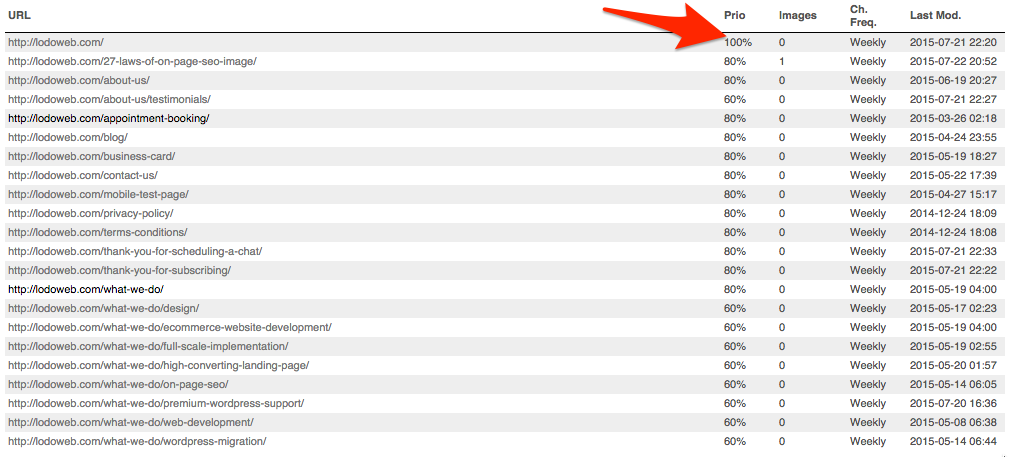

Another way is to setup up  Without a sitemap, crawlers are effectively navigating your site without any guidance or maps. There is no way for them to know where they are on your site and what the index priority is of each page. This indicates hierarchy.

Without a sitemap, crawlers are effectively navigating your site without any guidance or maps. There is no way for them to know where they are on your site and what the index priority is of each page. This indicates hierarchy. Google has even taken the liberty of releasing a

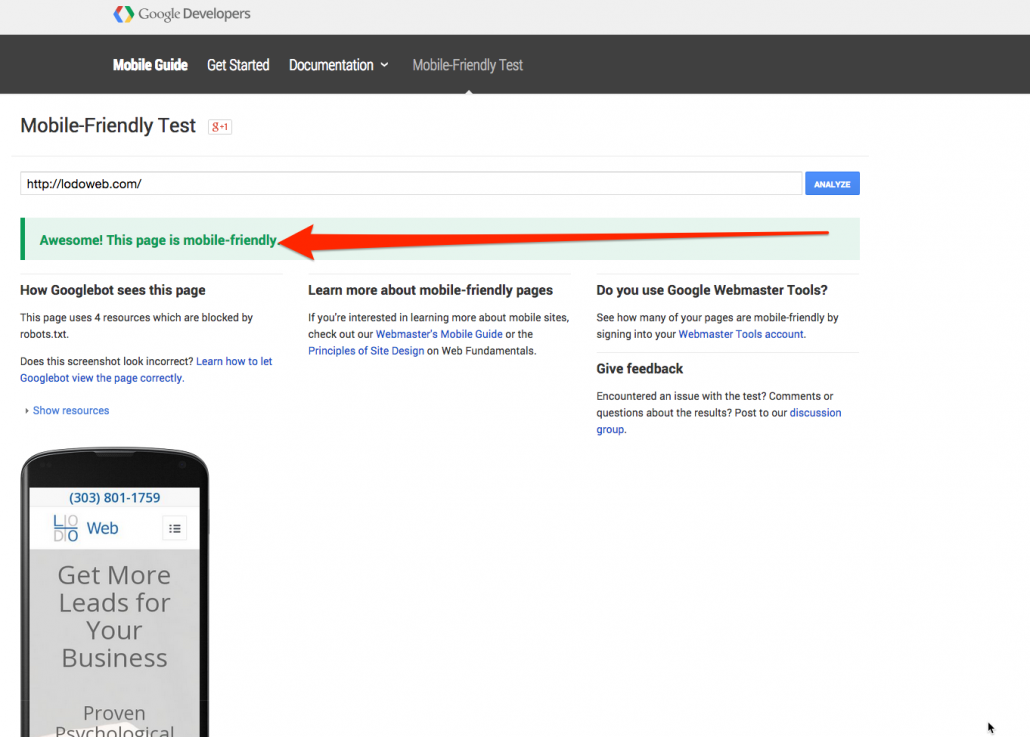

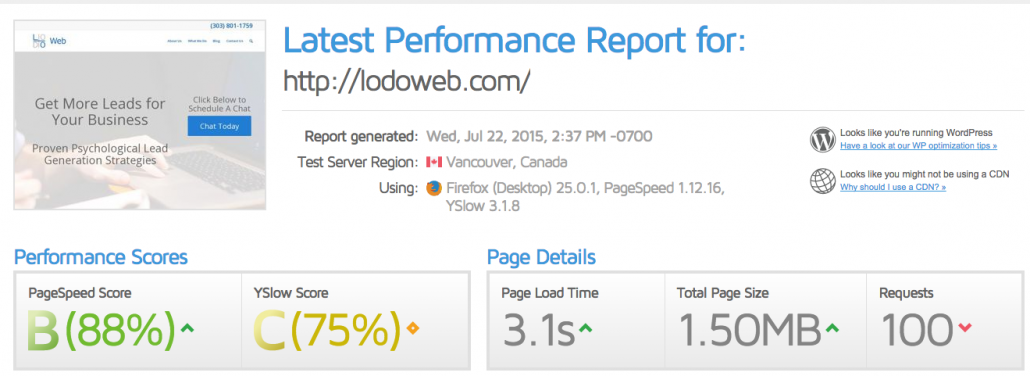

Google has even taken the liberty of releasing a  In accordance with responsive design, the page speed load time is also an important consideration for rank. The ages of 56K modems, where you were accustomed to wait about 30 seconds to load a flat HTML page, are gone. Thankfully. It should take seconds to load your site in Google.

In accordance with responsive design, the page speed load time is also an important consideration for rank. The ages of 56K modems, where you were accustomed to wait about 30 seconds to load a flat HTML page, are gone. Thankfully. It should take seconds to load your site in Google. Now, the root domain doesn’t have a whole lot of

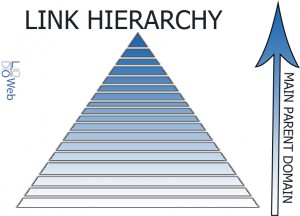

Now, the root domain doesn’t have a whole lot of Have you ever visit a site and admire how organized everything is? How every page fits into the other one and it flows quite nicely? This is because of an organized permalink structure. The permalink structure, link structure, or link schema represents the relationship of all your links as compared to the sub pages before them. This goes all the way up to the home page. This has an indication of the overall structure and the actual hierarchical relationship of your links compared to the rest of the links on the site. Now, this is isn’t internal linking, but more so the structured organization of your site. All sites should maintain a hierarchy from the home page and then the parent page and the child page and so on and so on.

Have you ever visit a site and admire how organized everything is? How every page fits into the other one and it flows quite nicely? This is because of an organized permalink structure. The permalink structure, link structure, or link schema represents the relationship of all your links as compared to the sub pages before them. This goes all the way up to the home page. This has an indication of the overall structure and the actual hierarchical relationship of your links compared to the rest of the links on the site. Now, this is isn’t internal linking, but more so the structured organization of your site. All sites should maintain a hierarchy from the home page and then the parent page and the child page and so on and so on. Who’s getting hungry? Who knew we would be talking about food in an article about SEO?

Who’s getting hungry? Who knew we would be talking about food in an article about SEO? not suggesting to start using all of them. You should certainly have accounts with the main ones, but not all of them. It is a business and personal decision which accounts to have.

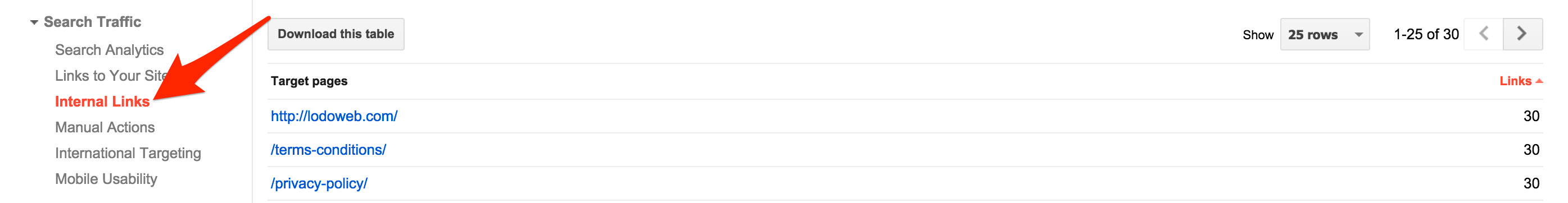

not suggesting to start using all of them. You should certainly have accounts with the main ones, but not all of them. It is a business and personal decision which accounts to have. Again, Google has found this important enough to include it in their webmaster tools. This is clear that they find it important enough and you should too. If you ever notice any successful blogs or site, they are always referencing previous posts or pages that already exist on their site. Likewise, each additional link to the site shows the level of importance to the link referenced. Eventually, if you break out your site into the whats linking to what, you’ll see it’ll resemble a web. This web is how search engines can gauge the level of perceived internal authority of individual pages on your site.

Again, Google has found this important enough to include it in their webmaster tools. This is clear that they find it important enough and you should too. If you ever notice any successful blogs or site, they are always referencing previous posts or pages that already exist on their site. Likewise, each additional link to the site shows the level of importance to the link referenced. Eventually, if you break out your site into the whats linking to what, you’ll see it’ll resemble a web. This web is how search engines can gauge the level of perceived internal authority of individual pages on your site.

What is the Difference Between Black Hat White Hat and Grey Hat?

What is the Difference Between Black Hat White Hat and Grey Hat?